Taskflow helps you quickly write high-performance task-parallel programs with high programming productivity. It is faster, more expressive, and easier to integrate as a drop-in than many existing task programming libraries, and it typically takes fewer lines of code. The source code is available in our Project GitHub.

Start Your First Taskflow Program

The following program (simple.cpp) creates a taskflow of four tasks A, B, C, and D, where A runs before B and C, and D runs after B and C. When A finishes, B and C can run in parallel.

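#include <iostream>
#include <taskflow/taskflow.hpp>  // Taskflow is header-only

int main(){

  tf::Executor executor;
  tf::Taskflow taskflow;

  auto [A, B, C, D] = taskflow.emplace(  // create four tasks
    [] () { std::cout << "TaskA\n"; },
    [] () { std::cout << "TaskB\n"; },
    [] () { std::cout << "TaskC\n"; },
    [] () { std::cout << "TaskD\n"; }
  );

  A.precede(B, C);  // A runs before B and C
  D.succeed(B, C);  // D runs after B and C

  executor.run(taskflow).wait();

  return 0;
}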

Taskflow is header-only, so there is nothing to build or install. To compile the program, clone the Taskflow project and tell the compiler to include the headers under taskflow/.

~$ git clone https://github.com/taskflow/taskflow.git  # clone it only once
~$ g++ -std=c++20 simple.cpp -I taskflow/ -O2 -pthread -o simple
~$ ./simple
TaskA
TaskC
TaskB
TaskD

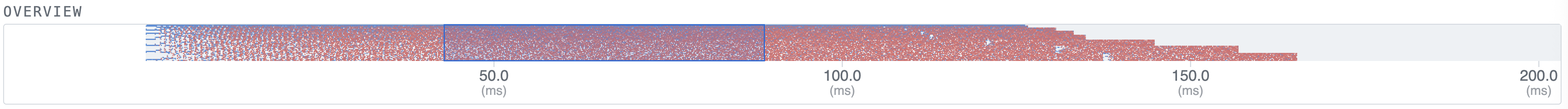

Taskflow comes with a built-in profiler, Taskflow Profiler, for you to profile and visualize taskflow programs in an easy-to-use web-based interface.

# run the program with the environment variable TF_ENABLE_PROFILER enabled

~$ TF_ENABLE_PROFILER=simple.tfp ./simple

# drag the generated tfp file to https://taskflow.github.io/tfprof/

Create a Subflow Graph

Taskflow supports recursive tasking for you to create a subflow graph from the execution of a task to perform recursive parallelism. The following program spawns a task dependency graph parented at task B.

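tf::Task B = taskflow.emplace([] (tf::Subflow& subflow) {
  // ... child tasks created through subflow (elided in the source) ...
}).name("B");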


Integrate Control Flow into a Task Graph

Taskflow supports conditional tasking for you to make rapid control-flow decisions across dependent tasks to implement cycles and conditions in an end-to-end task graph.

tf::Task cond = taskflow.emplace([](){ return std::rand() % 2; }).name("cond");

Compose Task Graphs

Taskflow is composable. You can create large parallel graphs through composition of modular and reusable blocks that are easier to optimize at an individual scope.

tf::Taskflow f1, f2;

// tasks of taskflow f1
tf::Task f1A = f1.emplace([]() { std::cout << "Task f1A\n"; }).name("f1A");
tf::Task f1B = f1.emplace([]() { std::cout << "Task f1B\n"; }).name("f1B");

// tasks of taskflow f2
tf::Task f2A = f2.emplace([]() { std::cout << "Task f2A\n"; }).name("f2A");
tf::Task f2B = f2.emplace([]() { std::cout << "Task f2B\n"; }).name("f2B");
tf::Task f2C = f2.emplace([]() { std::cout << "Task f2C\n"; }).name("f2C");


Launch Asynchronous Tasks

Taskflow supports asynchronous tasking. You can launch tasks asynchronously to dynamically explore task graph parallelism.

std::future<int> future = executor.async([](){
  std::cout << "async task returns 1\n";
  return 1;
});

executor.silent_async([](){ std::cout << "async task does not return\n"; });


Leverage Standard Parallel Algorithms

Taskflow defines algorithms for you to quickly express common parallel patterns using standard C++ syntaxes, such as parallel iterations, parallel reductions, and parallel sort.

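// assign 100 to each element in [first, last)
taskflow.for_each(
  first, last, [] (auto& i) { i = 100; }
);

// reduce the elements in [first, last) into init
taskflow.reduce(
  first, last, init, [] (auto a, auto b) { return a + b; }
);

// sort the elements in [first, last) in ascending order
taskflow.sort(
  first, last, [] (auto a, auto b) { return a < b; }
);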


Additionally, Taskflow provides composable graph building blocks for you to efficiently implement common parallel algorithms, such as parallel pipeline.

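// num_lines and buffer are assumed to be defined earlier;
// all three stages are assumed to be serial
tf::Pipeline pl(num_lines,
  tf::Pipe{tf::PipeType::SERIAL, [&](tf::Pipeflow& pf) {
    if(pf.token() == 5) {
      pf.stop();
    }
  }},
  tf::Pipe{tf::PipeType::SERIAL, [&](tf::Pipeflow& pf) {
    printf("stage 2: input buffer[%zu] = %d\n", pf.line(), buffer[pf.line()]);
  }},
  tf::Pipe{tf::PipeType::SERIAL, [&](tf::Pipeflow& pf) {
    printf("stage 3: input buffer[%zu] = %d\n", pf.line(), buffer[pf.line()]);
  }}
);

taskflow.composed_of(pl);
executor.run(taskflow).wait();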


Run a Taskflow through an Executor

The executor provides several thread-safe methods to run a taskflow. You can run a taskflow once, multiple times, or until a stopping criterion is met. These methods are non-blocking and return a tf::Future<void> that lets you query the execution status.

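// run the taskflow once and wait for that run to finish
tf::Future<void> run_once = executor.run(taskflow);
run_once.get();

// run the taskflow four times
executor.run_n(taskflow, 4);

// keep running the taskflow until the predicate returns true;
// the lambda must be mutable because it modifies its captured counter
executor.run_until(taskflow, [counter=5]() mutable {
  return --counter == 0; });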


Offload Tasks to a GPU

Taskflow supports GPU tasking for you to accelerate a wide range of scientific computing applications by harnessing the power of CPU-GPU collaborative computing using Nvidia CUDA Graph.

__global__ void saxpy(int n, float a, float *x, float *y) {
  int i = blockIdx.x*blockDim.x + threadIdx.x;
  if (i < n) {
    y[i] = a*x[i] + y[i];
  }
}

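tf::Task cudaflow = taskflow.emplace([&]() {
  // ... CUDA graph construction and launch (elided in the source) ...
}).name("CUDA Graph Task");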

Visualize Taskflow Graphs

You can dump a taskflow graph to a DOT format and visualize it using a number of free GraphViz tools such as GraphViz Online.

taskflow.dump(std::cout);


Supported Compilers

To use Taskflow v4.0.0, you need a compiler that supports C++20:

- GNU C++ Compiler at least v11.0 with -std=c++20

- Clang C++ Compiler at least v12.0 with -std=c++20

- Microsoft Visual Studio at least v19.29 (VS 2019) with /std:c++20

- Apple Clang (Xcode) at least v13.0 with -std=c++20

- NVIDIA CUDA Toolkit and Compiler (nvcc) at least v12.0 with host compiler supporting C++20

- Intel oneAPI DPC++/C++ Compiler at least v2022.0 with -std=c++20

Taskflow works on Linux, Windows, and Mac OS X.

Get Involved

Visit our Project Website and showcase presentation to learn more about Taskflow. To get involved:

We are committed to supporting trustworthy development for both academic and industrial research projects in parallel and heterogeneous computing. If you are using Taskflow, please cite the following paper, which we published in IEEE TPDS in 2022:

More importantly, we appreciate all Taskflow Contributors and the following organizations for sponsoring the Taskflow project!

License

Taskflow is open-source under the permissive MIT license. You are completely free to use, modify, and redistribute any work on top of Taskflow. The source code is available in Project GitHub and is actively maintained by Dr. Tsung-Wei Huang and his research group at the University of Wisconsin at Madison.